Election Polls, Plinko, and Weather Forecasting

2020-11-09 11:57:13.000 – Nate Iannuccillo, Weather Observer/Education Specialist

Like many people in the United States this past week, I spent my evenings watching election results come in. One of the striking things about this election, as well as elections in recent years, was the stark contrast between the predicted results and what actually happened.

A question that has come up consistently in this election, as in previous years, is “How could the polls be so wrong?” As a weather forecaster, I find this question all too familiar, and any seasoned meteorologist can tell you a few things about the discrepancies between forecast models and the weather that actually results.

For anyone familiar with complex systems and deterministic forecasting, the inherent challenges are clear. Yesterday and today, as I prepared the higher summits forecast, I reflected on the similarities between the two environments. I say this as someone with admittedly little familiarity with political forecasting and how prediction polls are compiled, but I understand the concept of modelling complex systems, and it’s here that I see some compelling similarities.

A big part of the challenge in modelling is trying to replicate what we describe as a chaotic system. In a chaotic system, we may understand the basic interactions, but describing the flow of the system as a whole becomes complex and difficult to predict. Such systems exhibit a hypersensitivity to initial conditions: tiny differences in the starting state, including small errors in the data we use to start a model, grow into large differences later on. Essentially, the farther we get from the real-time data, the less able we are to forecast the system as a whole.
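To make “hypersensitivity to initial conditions” concrete, here is a minimal sketch using the logistic map, a standard textbook example of a chaotic system. It is not a weather model and is not from the original post; the function names and the starting values (x0 = 0.2, a nudge of 1e-10) are hypothetical choices for illustration. Two runs that start almost identically track each other for a while, then disagree completely.

```python
def logistic_trajectory(x0: float, steps: int, r: float = 4.0) -> list[float]:
    """Iterate the logistic map x -> r * x * (1 - x), starting at x0.

    With r = 4.0 the map is chaotic on [0, 1] (illustrative choice).
    """
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


def divergence(x0: float, nudge: float, steps: int) -> list[float]:
    """Absolute gap, step by step, between two runs whose starts differ by `nudge`."""
    a = logistic_trajectory(x0, steps)
    b = logistic_trajectory(x0 + nudge, steps)
    return [abs(p - q) for p, q in zip(a, b)]


if __name__ == "__main__":
    # Hypothetical setup: a one-in-ten-billion "measurement error" at the start.
    gaps = divergence(x0=0.2, nudge=1e-10, steps=50)
    for step in (0, 10, 20, 30, 40, 50):
        print(f"step {step:2d}: gap = {gaps[step]:.2e}")
```

The gap starts out invisible and grows roughly geometrically until, after a few dozen steps, the two runs are no more alike than two random numbers. The rule itself is perfectly deterministic; the forecast fails only because the starting point was not known exactly.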

Take, for example, the classic carnival (and The Price Is Right) game, Plinko. We know that when a ball dropped into the labyrinth hits a peg, it will fall to one side or the other. Sounds simple enough, right? But as we watch the ball’s trajectory, we find that balls dropped in approximately the same spot follow a wide range of different paths through the labyrinth. Despite this basic determinism, as we keep playing Plinko we notice that the ball’s trajectory exhibits hypersensitivity to the initial conditions, a sensitivity that becomes more and more pronounced as the ball works its way down the labyrinth.

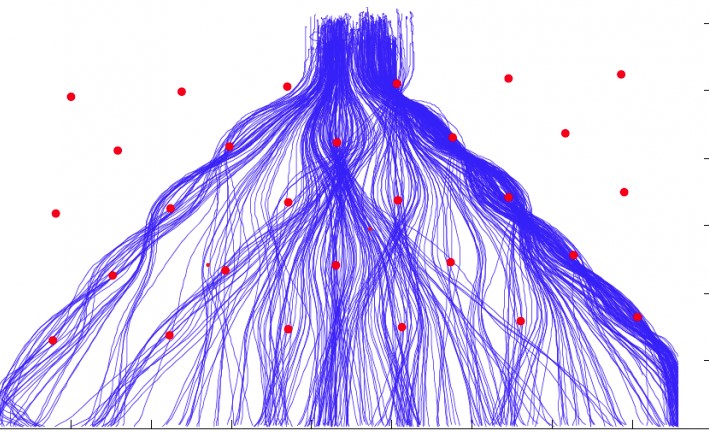

Modelling Plinko runs in ensemble style.

We can see how the trajectories begin with a fair degree of certainty, but with time, that certainty disintegrates, yielding a large uncertainty.
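An ensemble of Plinko drops can be sketched in a few lines of code. This is a simplified stand-in, not the model behind the figure: it assumes an idealized board where each peg sends the ball one step left or right with equal probability, using a coin flip to represent the tiny, unobservable differences that decide each bounce. The function names, ball count, and row count are hypothetical. The quantity to watch is the ensemble spread (the standard deviation of ball positions) after each row.

```python
import random
import statistics


def plinko_ensemble(n_balls: int, n_rows: int, seed: int = 0) -> list[list[int]]:
    """Drop n_balls from the same slot and record each ball's position after every row.

    Assumption: each peg bounces the ball one step left (-1) or right (+1)
    with equal probability, like an idealized Galton board.
    """
    rng = random.Random(seed)  # fixed seed so runs are repeatable
    paths = []
    for _ in range(n_balls):
        x = 0  # every ensemble member starts in the same slot
        path = [x]
        for _ in range(n_rows):
            x += rng.choice((-1, 1))
            path.append(x)
        paths.append(path)
    return paths


def ensemble_spread(paths: list[list[int]]) -> list[float]:
    """Population standard deviation of ball positions at each row (row 0 = drop point)."""
    n_steps = len(paths[0])
    return [statistics.pstdev([p[row] for p in paths]) for row in range(n_steps)]


if __name__ == "__main__":
    spread = ensemble_spread(plinko_ensemble(n_balls=1000, n_rows=12))
    for row, s in enumerate(spread):
        print(f"row {row:2d}: spread = {s:.2f}")
```

All members agree at the drop point (a spread of zero), and the spread widens with every row of pegs; for this idealized board it grows roughly like the square root of the number of rows. That widening fan is the same behavior a forecaster sees when ensemble members drift apart with lead time.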

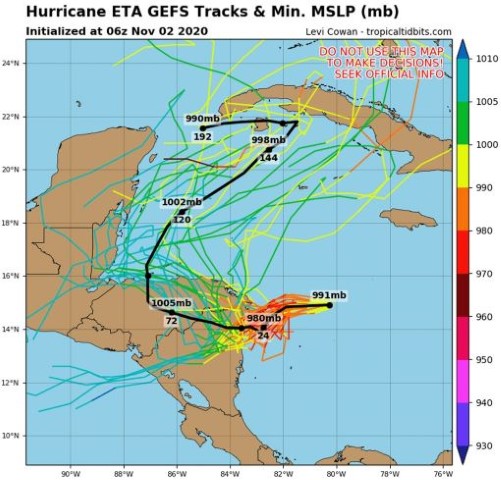

Models of complex systems exhibit similar mathematical behavior, and weather models are no exception. Ensemble forecasts from hurricane models are a common example.

More recently, we can see a “spaghetti model” from about a week ago showing potential trajectories for Hurricane Eta, which is currently active off the southern tip of Florida.

Notice how the trajectories diverge and gain uncertainty with time.

Weather models, unlike our relatively simple Plinko game, use a complex network of equations and parameterizations that attempt to replicate the dynamics of our atmosphere. Many large-scale models run on some of the most powerful supercomputers in the world, and they incorporate large amounts of in situ data, which are used in conjunction with the model physics and parameterizations to replicate our weather at high resolution.

This conceptual knowledge is important to the human forecaster, who is constantly trying to interpret model output and communicate its uncertainty. Understanding this basic idea also clarifies why forecasters always look to the most recent model runs: uncertainty increases the further we get from a model’s initialization.

As a weather forecaster, I’m constantly looking at model output at work, so when I see election polls and analysis, I find myself asking very similar questions about how political situations are being modelled. In politics, we see a network of voters facing categorical choices, with decisions shaped by a complex array of variables. This leads me to my questions: What type of data is being used to initialize this model? What exactly are the model physics and parameters when trying to replicate something as frivolous and whimsical as the human mind?

I recognize that these questions have tangible answers that lie outside my current knowledge, and it is my basic conceptual knowledge of modelling that makes me aware those answers exist. Even with something as fluid and unpredictable as an election, scientists of a different nature are at work, sculpting a physics out of human voting tendencies.

With this in mind, I wonder where the limitations lie in election forecasts. In weather modelling, our knowledge of the physics continues to improve, and models are updated and adjusted now and again, but weather models are still largely limited by computing power, that is, by our ability to account for the smallest details of our complex Earth system and represent them at high resolution.

With election forecasting, I wonder whether this is also the case, or whether we are missing something in the “physics” I referred to earlier. All models have their limitations and uncertainties, and I find myself doing my best to interpret and understand them, in meteorology and politics alike.

Nate Iannuccillo, Weather Observer/Education Specialist